Today, Driver Assistance Systems have made significant progress and several new algorithms have been designed to improve the understanding of environment surrounding the vehicle. Lane detection is one of the key issues of environment understanding. The problem is well-explored with existing approaches using varied sensors namely LIDAR (Light Detection and Ranging), radar and camera.

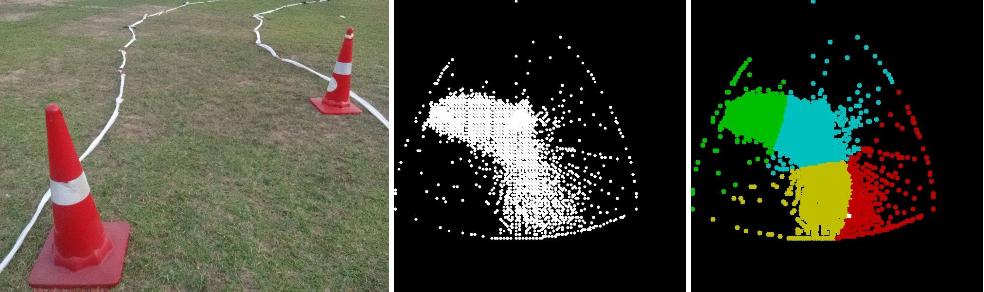

For the annual Intelligent Ground Vehicle Competition (IGVC) lane detection is to be done on grass and the narrower lanes (as compared to roads) present new challenges such as the case of only a single lane remaining visible. For this year’s IGVC we had implemented a support vector machine (SVM) model to detect the lanes.

The SVM Model

The approach followed by us at the Autonomous Ground Vehicle research group had been to train an SVM on a captured image by manually annotating lane and non-lane points and using that model to detect the lanes in the camera feed afterward as described below.

The grassy portions of the image were removed with the SVM (Support Vector Machine) classifier where features for learning were taken as a kernel of an 8×8 ROI of the image. This kernel was classified as grass or non-grass type using a polynomial SVM classifier. The classifier was unable to generate satisfactory results due to the shadows which perturb the HSV values of the regions. Hence, a shadow removal technique was used. To that end, the image was first converted to the YCrCb color space. Then, all the pixels with intensity less than 1.5 times the standard deviation of Y channel were classified as shadow pixels and the image was converted into binary. Curves were generated by the classifier based on results over the shadow removed images. Although this was prone to false positives, most of the lanes were classified as nongrass. Also, grass offers a more uniform patch as compared to lanes as the lane portions in the image vary in brightness and lighting conditions. Lanes also exhibit non-uniform thickness. Hence, both the thresholding and Hough line method would still output false lanes. This would, even more, be the case in thresholding, as it is very difficult to find fine threshold values. So, Random Sample Consensus (RANSAC) was incorporated to detect lanes. On rigorous testing, RANSAC was found to be a reliable technique for curve-fitting. Finally, the image was transformed to a top down view by using an inverse perspective transform (IPT).

This classifier did give decent results, the problems, however, were plenty: the shadow removal didn’t work well, the classifier required a large dataset which we didn’t have and the effort required to manually annotate the image before each run (due to varying lighting conditions).

Blind Colour Decomposition Model

A final year student – Arna Ghosh had worked with the blind color decomposition method (BCD) to analyze histological images for his internship. Though this was used for analyzing stains in microscope images, we thought this could potentially have applications in lane detection as well. So, I and Arna set forth to implement the same.

The method we came up with involved the direct application of BCD with modifications of our own to suit this application. BCD relies on expectation-maximization algorithm applied on the Maxwellian triangle. Hence, the first step was to convert the RGB image of captured by the camera to its Maxwellian triangle.

Since the expectation maximization initialises its parameter estimates by using k-means on the data and then improves the estimates using an iterative process, it is more robust compared to methods based on segmentation using k-means. When we run it on a video stream obtained from the camera, we use estimates obtained from the previous frame as initial estimates for the next frame. Thus, it saves processing time as k-means need not be applied on each frame. For the first frame, the prior is the initial supervision provided to the autonomous system about the road. This method also addresses the problems of gradually changing road conditions using a constant adaptation of the prior knowledge. With every iteration, we update the prior knowledge to move towards the present centroid of the road cluster detected. The rate of movement is controlled using a learning rate.

Segmentation output:

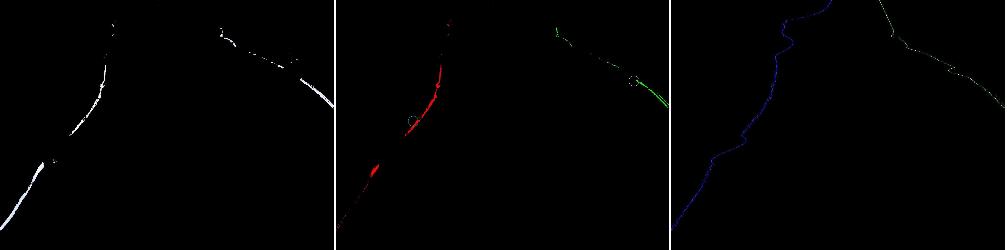

After the road/lanes are segmented from the rest of the image, the next step of the algorithm is to interpolate the data to form continuous outputs – the edges of the road and the lanes respectively.

Convolutional Neural Networks

I recently began experimenting with lane detection using CNNs, mostly because I could overcome my laziness of annotating the ground truth by the availability of a dataset online. The data consists of about 8000 images of size 1200×1200 with the ground truth of the same size. Now, most GPUs today do not have sufficient memory to train a CNN with images of that large a size. So, I first broke down each image into 32×32 windows. After this point, I have tried various approaches but with limited success:

- Use a 3-layer convolutional network (convolution-relu-pool) followed by a two layer fully connected network which is again reshaped to 32×32. I framed this as a regressionproblem with L2 (euclidean) loss.

- Use the same network as above but frame the problem as a classification one (lane vs non-lane binary classification). The loss used was softmax. This gave better results than the previous approach but not close to the ones obtained through SVM and BCD.

- Use the same convolutional network as above, but replace the fully connected layers by deconvolutional layers as use in fully convolutional networks. This is a semantic segmentation problem. I haven’t incorporated the skip-layers yet and the output, thus, was very coarse.

The original image:

The ground truth:

The output of regression network:

So far, these three approaches clearly haven’t been able to improve the existing methods I described above, but I am still working on these and will post updates soon (right now, I am busy with a project on depth-regression – more on that later). However, the interesting takeaway from these experiments is how a single problem, in this case – lane detection, could be framed as a regression, classification, as well as a semantic segmentation problem!

Hello. I see that you don’t update your website too often. I know that writing posts is time consuming and boring.

But did you know that there is a tool that allows you to create new articles using existing

content (from article directories or other pages

from your niche)? And it does it very well. The new articles are unique and pass the copyscape

test. You should try miftolo’s tools